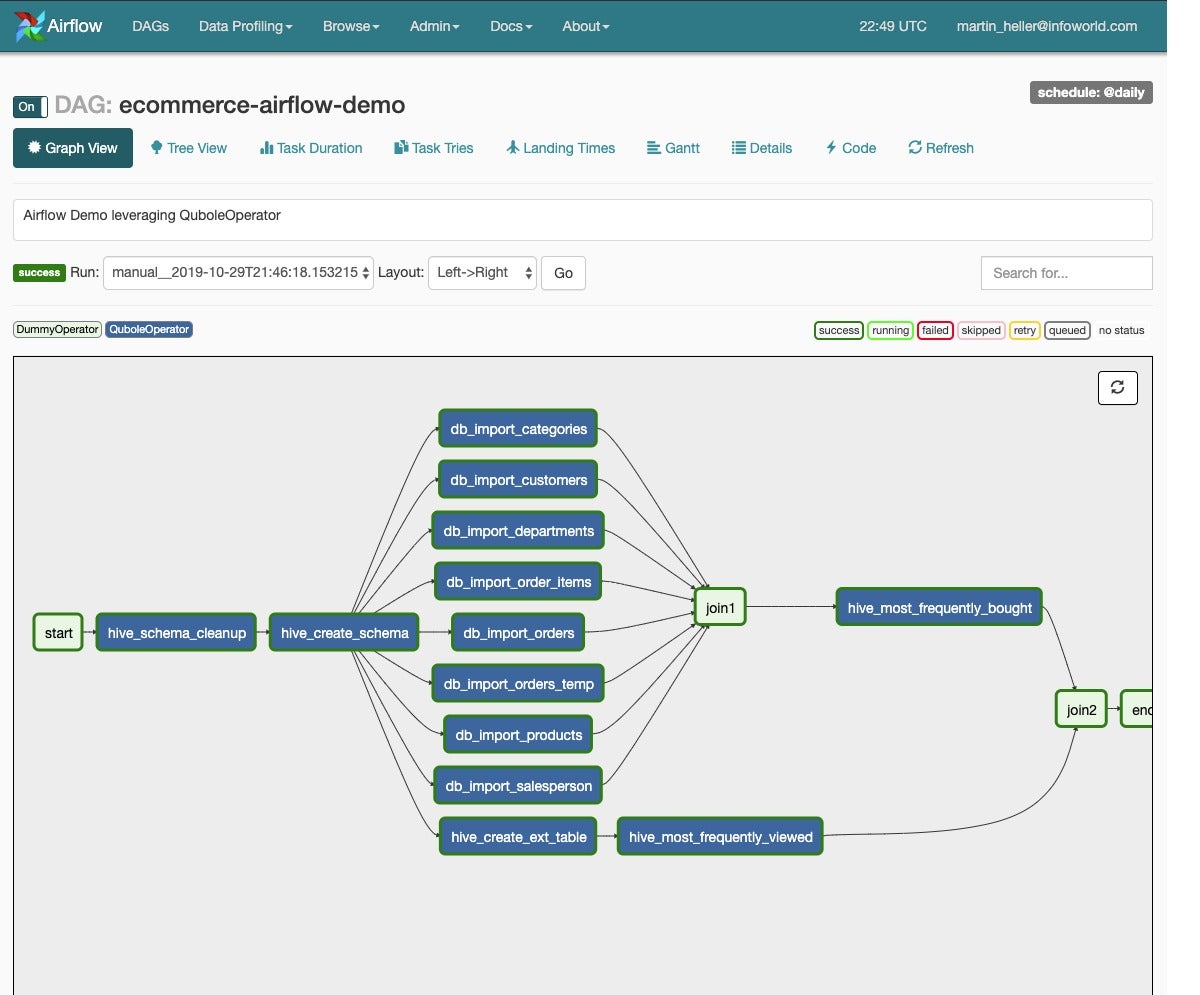

I've written a custom operator (DataCleaningOperator), which corrects JSON data based on a provided schema.

The unit tests previously worked when I didn't have to instantiate a TaskInstance and provide the operator with a context. However, I've updated the operator recently to take in a context (so that it can use xcom_push).

Here is an example of one of the tests:

    DEFAULT_DATE = datetime.today()


    class TestDataCleaningOperator(unittest.TestCase):
        """
        Class to execute unit tests for the operator 'DataCleaningOperator'.
        """
        # Test data set here as class variables such as self.test_data_correct

        def test_operator_cleans_dataset_which_matches_schema(self) -> None:
            """
            Test: Attempt to clean a dataset which matches the provided schema.
            Verification: Returns the original dataset, unchanged.
            """
            task = DataCleaningOperator(
                task_id="test_operator_cleans_dataset_which_matches_schema",
                data_file_object=deepcopy(self.test_data_correct),
                # remaining arguments not shown
            )
            ti = TaskInstance(task=task, execution_date=DEFAULT_DATE)
            result: List = task.execute(ti.get_template_context())
            self.assertEqual(result, self.test_data_correct)

However, when the tests are run, the following error is raised:

    DagRun for 'test_dag_data_cleaning' with date 12:09:51.538954+00:00 not found

This is related to the line in which a task instance is instantiated in test_operator_cleans_dataset_which_matches_schema.

Why can't Airflow locate the test_dag_data_cleaning DAG? Is there a specific configuration I've missed? Do I need to also create a DAG run instance or add the DAG to the dag bag manually if this test DAG is outside of my standard DAG directory? All normal (non-test) DAGs in my DAG dir run correctly.

In case it helps, my current Airflow version is 2.2.3 and the structure of my project is:

    airflow

The code you have written uses the Airflow 2.0 format of unit test. Now that you have upgraded to Airflow 2.2.3, the unit test requires you to create a DagRun before you create the task instance.

Below is the sample code which worked for me:

    import unittest

    import pendulum
    from airflow import DAG
    from airflow.utils.state import DagRunState
    from airflow.utils.types import DagRunType

    from operators.test_operator import EvenNumberCheckOperator

    DEFAULT_DATE = pendulum.datetime(2022, 3, 4, tz='America/Toronto')
    # TEST_TASK_ID is used below; its definition is not shown.


    class TestEvenNumberCheckOperator(unittest.TestCase):

        def setUp(self):
            # The default_args value and the operator instantiation are not shown.
            self.dag = DAG('test_dag4', default_args=...)

        def test_even(self):  # method name is a placeholder; the original is not shown
            """Tests that the EvenNumberCheckOperator returns True for 10."""
            # Create the DagRun first, then fetch the task instance from it.
            dagrun = self.dag.create_dagrun(
                state=DagRunState.RUNNING,
                # remaining arguments not shown
            )
            ti = dagrun.get_task_instance(task_id=TEST_TASK_ID)
            # Attach the operator to the task instance before executing it.
            ti.task = self.dag.get_task(task_id=TEST_TASK_ID)
            result = ti.task.execute(ti.get_template_context())

What is a Data Workflow and why it is hard to manage

A data workflow is a well-defined series of steps to accomplish a particular data-related task. Examples include:

- Data pipelines: extracting, loading and transforming (ELT) data from one data source to another
- Analytics engineering: creating, calculating or building analytics or data warehouse models using SQL
- Machine learning: training, evaluating and deploying an ML model

Data workflows range from simple to very complex, with hundreds of steps and branching dependencies. Because of this complexity and the inter-related steps, managing data workflows is often a daunting task. So in this blog I'll explore some best practices to hopefully make the Airflow experience less daunting for newcomers.

Airflow is a leading Apache open-source data orchestration tool for running data workflows, used in many tech companies. For example, Uber and Airflow's original creator, Airbnb, both use Airflow extensively.

Airflow provides an easy-to-use web UI with integrated logging, monitoring, workflow run management and dependency handling. This includes managing dependencies and task monitoring/management through its web UI:

- Monitor the status of each step in the workflow

Airflow workflows are 'DAGs' and 100% code

In Airflow, the technical name for data workflows is Directed Acyclic Graphs (DAGs). An example of an Airflow DAG looks like this:

Each 'step' of the workflow is known as a task. It's called a DAG because the series of tasks only 'flows' in one direction (it doesn't double back on itself).

The main function of Airflow is its ability to schedule workflow runs.
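The "one direction, no doubling back" property of a DAG mentioned in this post is what guarantees that a valid run order exists. As a rough illustration in plain Python (this is not Airflow's API, and the task names `extract`, `transform`, `load` and `report` are hypothetical), the standard library's `graphlib` module (Python 3.9+) can order tasks by their dependencies and reject a graph that contains a cycle:

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical workflow: each task maps to the set of tasks it depends on.
workflow = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}


def run_order(graph):
    """Return the tasks in an order that respects every dependency."""
    return list(TopologicalSorter(graph).static_order())


def is_acyclic(graph):
    """Return True if the graph has no cycle, i.e. it is a valid DAG."""
    try:
        run_order(graph)
        return True
    except CycleError:
        return False


order = run_order(workflow)
print(order)

# Making 'extract' depend on 'report' makes the flow double back on itself.
cyclic = {**workflow, "extract": {"report"}}
print(is_acyclic(workflow), is_acyclic(cyclic))
```

A scheduler can only decide what to run next when such an ordering exists, which is presumably why workflow tools insist on the acyclic constraint.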
